Date: 2026-03-23 09:27:23

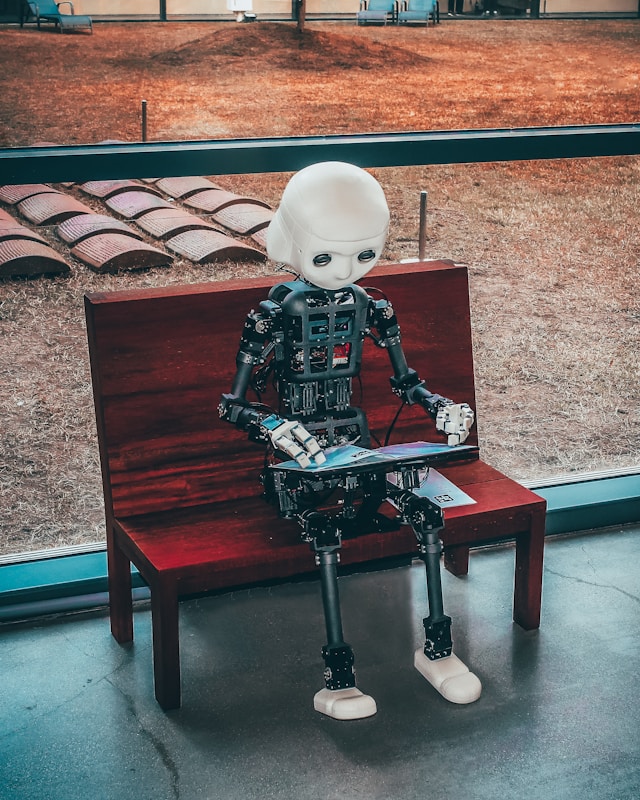

Robots can follow commands, but they still fail at recognizing a mistake before it turns into damage. Researchers at Oklahoma State University are now trying to change that by teaching machines to respond to human instinct in real time.

The team is developing a neuroadaptive control system that allows robots to pick up on signals from the human brain and adjust their actions instantly. Simply put, if a human operator senses something is going wrong, the robot should react before the error escalates.

The system relies on brain-computer interfaces to detect what are known as error-related potentials, or ErrPs.

These signals are triggered almost immediately when a person recognizes a mistake, even before they physically respond.

Using a wearable electroencephalogram (EEG) cap, the system captures these signals and feeds them into a shared-control robotic setup. Once an ErrP is detected, the robot can slow down, stop, or hand back control within milliseconds.
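The slow-down, stop, or hand-back response described above can be pictured as a small arbitration rule. The Python sketch below is illustrative only: the `Command` type, the `arbitrate` function, and every threshold in it are hypothetical and are not taken from the Oklahoma State system.

```python
from dataclasses import dataclass


@dataclass
class Command:
    mode: str           # "continue", "slow", "stop", or "handover"
    speed_scale: float  # multiplier applied to the robot's planned velocity


def arbitrate(errp_probability: float, consecutive_detections: int) -> Command:
    """Map a decoder's ErrP confidence to a shared-control response.

    All thresholds here are assumptions chosen for illustration.
    """
    if not 0.0 <= errp_probability <= 1.0:
        raise ValueError("errp_probability must be between 0 and 1")
    if errp_probability < 0.5:
        return Command("continue", 1.0)   # no credible error signal
    if errp_probability < 0.8:
        return Command("slow", 0.3)       # weak signal: reduce speed
    if consecutive_detections >= 3:
        return Command("handover", 0.0)   # repeated strong signals: return control
    return Command("stop", 0.0)           # single strong signal: halt


# Example: a weak signal slows the robot, repeated strong ones hand back control.
weak = arbitrate(0.6, consecutive_detections=0)
strong = arbitrate(0.9, consecutive_detections=3)
```

Grading the response by confidence is one plausible design choice: it keeps a single noisy EEG reading from halting the task outright while still reacting quickly to a clear signal.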

Catching errors instantly

“In high-stakes environments, like decommissioning a nuclear site, performing deep-sea inspections, we can’t yet turn the keys over entirely to a robot,” said Hemanth Manjunatha. “The world is too unpredictable.”

The approach aims to fix a major flaw in current teleoperation systems. While humans can guide robots remotely, the process is mentally exhausting and often too slow to prevent sudden failures.

“Normally, a robot only knows it has failed when it hits something,” Manjunatha said. “By the time a human corrects it, it might be too late. With brain signals, the robot gets an early warning.”

At the core of the system is the ability to read ErrPs generated in the brain’s anterior cingulate cortex. These signals act like an internal alarm.

“ErrPs are specific electrical patterns generated by your brain, specifically the anterior cingulate cortex, the moment you recognize a mistake,” Manjunatha said.

“The fascinating part is that your brain reacts to an error faster than you can physically move your hand to fix it.”

Brains guiding machines

To make the system practical, the researchers built an adaptive decoding model that learns general brain patterns and then adjusts to individual users.

This reduces the long setup times that brain-computer interfaces typically require.
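The article does not describe the decoder's internals, so the sketch below substitutes a deliberately simple technique to show the general-then-personal idea: a nearest-centroid classifier whose population-level centroids are blended with a handful of calibration trials from a new user. The class name, the two-dimensional features, and the `user_weight` parameter are all assumptions.

```python
def centroid(samples):
    """Component-wise mean of equally sized feature vectors."""
    count = len(samples)
    return [sum(s[i] for s in samples) / count for i in range(len(samples[0]))]


def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


class AdaptiveErrPDecoder:
    """Nearest-centroid stand-in for an adaptive ErrP decoder (hypothetical)."""

    def __init__(self, error_centroid, correct_centroid):
        # Centroids learned from many users (the "general brain patterns").
        self.error_centroid = list(error_centroid)
        self.correct_centroid = list(correct_centroid)

    @staticmethod
    def _blend(general, personal, user_weight):
        return [(1 - user_weight) * g + user_weight * p
                for g, p in zip(general, personal)]

    def adapt(self, error_trials, correct_trials, user_weight=0.9):
        """Shift both centroids toward a few calibration trials from one user."""
        self.error_centroid = self._blend(
            self.error_centroid, centroid(error_trials), user_weight)
        self.correct_centroid = self._blend(
            self.correct_centroid, centroid(correct_trials), user_weight)

    def is_error(self, features):
        return (squared_distance(features, self.error_centroid)
                < squared_distance(features, self.correct_centroid))


decoder = AdaptiveErrPDecoder(error_centroid=[1.0, 1.0], correct_centroid=[0.0, 0.0])
trial = [2.1, 2.0]  # a "correct" trial from a user whose signals sit higher overall
before = decoder.is_error(trial)   # misread as an error by the general model
decoder.adapt(error_trials=[[3.0, 3.1], [3.0, 2.9]],
              correct_trials=[[2.0, 2.1], [2.0, 1.9]])
after = decoder.is_error(trial)    # read correctly after a short calibration
```

In this toy case, four calibration trials are enough to move the decision boundary to where this user's signals actually fall, which is the kind of shortcut that replaces hours of per-person setup.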

“Everyone’s brain signals are as unique as fingerprints,” Manjunatha said. “If a system only works for one person after hours of setup, it’s not practical.”

Safety rules are enforced using Signal Temporal Logic, which defines strict behavioral limits for the robot. This ensures that even when reacting to human brain signals, the system operates within controlled boundaries.
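Signal Temporal Logic rules are usually scored with a "robustness" value: positive when a recorded signal satisfies the rule, negative when it violates it, with the magnitude showing the margin. The sketch below checks two example rules over a sampled speed trace. The rules, limits, and function names are assumptions for illustration, not the project's actual specification.

```python
def rob_always_below(signal, limit):
    """Robustness of 'always (signal <= limit)': the worst-case margin."""
    return min(limit - x for x in signal)


def rob_eventually_below(signal, limit, start, horizon):
    """Robustness of 'eventually within [start, start + horizon] (signal <= limit)'."""
    window = signal[start:start + horizon + 1]
    return max(limit - x for x in window)


def rob_response(speed, errp_flags, safe_speed, horizon):
    """Robustness of 'always (ErrP -> eventually within horizon (speed <= safe_speed))'.

    ErrP flags are treated as plain booleans, so steps without a flag
    satisfy the implication trivially and do not constrain the result.
    """
    margins = [rob_eventually_below(speed, safe_speed, t, horizon)
               for t, flagged in enumerate(errp_flags) if flagged]
    return min(margins) if margins else float("inf")


speed = [0.8, 0.8, 0.5, 0.1, 0.0]          # m/s at successive time steps
errp = [False, True, False, False, False]  # ErrP detected at step 1

within_limit = rob_always_below(speed, limit=1.0)                 # positive: never exceeds 1.0
reacts_in_2 = rob_response(speed, errp, safe_speed=0.2, horizon=2)  # positive: slow by step 3
reacts_in_1 = rob_response(speed, errp, safe_speed=0.2, horizon=1)  # negative: too slow
```

The same trace passes the two-step deadline and fails the one-step deadline, which shows how a temporal rule can put a hard bound on how quickly the robot must respond to a brain signal.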

“Safety is the cornerstone of this project,” Manjunatha said. “The brain signals tell us when something is wrong, but Signal Temporal Logic provides the rulebook.”

The system is being tested using NVIDIA Isaac Lab and Isaac ROS, supported by RTX PRO 6000 GPUs to handle real-time signal processing and simulation.

Beyond industrial use, the technology could extend to healthcare. Future applications include prosthetics and exoskeletons that adjust based on user intent.

“Imagine a prosthetic limb that senses when the user feels it’s moving incorrectly and adjusts itself,” Manjunatha said.